Meet the first-ever AI Evaluations Assistant

Artificial intelligence makes it fast and easy to evaluate session submissions, support your evaluations team, and deliver the highest-quality event program.

Fast, Fair, and Frictionless Session Selection

Get structured, reasoned, and transparent evaluations that help you quickly prioritize the highest-impact sessions for your agenda.

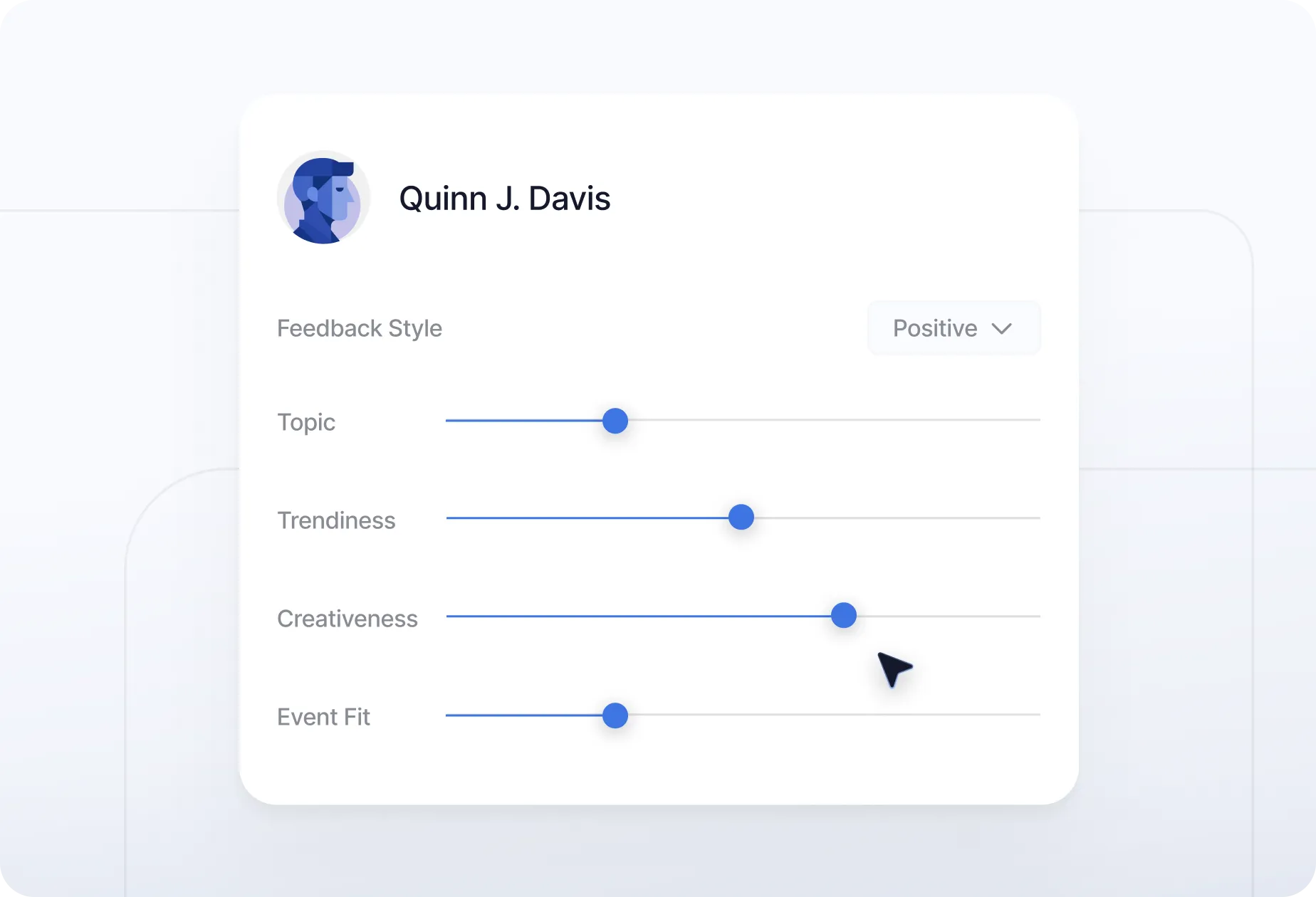

Build Reviewer Personas

Define exactly who reviews your sessions — powered by AI, shaped by your priorities. Create tailored evaluators that deliver feedback aligned with your event’s goals.

- Customize names, professions, tone, and areas of expertise.

- Generate realistic, on-brand feedback for every submission.

- Fine-tune personality and focus in just a few clicks.

- Replace generic AI bots with personas modeled after real reviewers.

Customize Persona Expertise

You decide how your AI personas think. Whether it's a Product Strategist analyzing tech sessions or an Executive Director evaluating leadership talks, every insight is tailored to your goals.

- Apply weighted scoring for originality, expertise, and audience value.

- Customize how each persona prioritizes and evaluates sessions.

- Scale consistent, data-driven feedback across every track.
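The weighted scoring idea can be illustrated with a minimal sketch. It assumes the AI has already produced a 0 to 10 rating for each criterion; the `Persona` class, the criterion names, and the weights are hypothetical and do not represent Sessionboard's actual rubric or API. Each persona combines the same ratings according to its own weights, which is why two personas can score the same submission differently.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Hypothetical reviewer persona with a weighted scoring rubric."""
    name: str
    weights: dict[str, float]  # criterion -> weight; weights must sum to 1.0

    def __post_init__(self) -> None:
        total = sum(self.weights.values())
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"weights for {self.name!r} sum to {total}, expected 1.0")

    def score(self, ratings: dict[str, float]) -> float:
        """Weighted sum of per-criterion ratings (assumed 0-10 scale)."""
        missing = self.weights.keys() - ratings.keys()
        if missing:
            raise KeyError(f"missing ratings for: {sorted(missing)}")
        return sum(weight * ratings[criterion] for criterion, weight in self.weights.items())


# Two hypothetical personas that value the same criteria differently.
strategist = Persona("Product Strategist", {"originality": 0.5, "expertise": 0.25, "audience_value": 0.25})
director = Persona("Executive Director", {"originality": 0.25, "expertise": 0.25, "audience_value": 0.5})

ratings = {"originality": 8, "expertise": 6, "audience_value": 9}
print(strategist.score(ratings))  # 7.75
print(director.score(ratings))    # 8.0
```

Keeping the weights in one validated profile is what makes the scoring repeatable: every submission a persona reviews is combined by the same formula.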

AI-Generated Feedback & Scores

Your AI personas deliver in-depth evaluations that go beyond surface-level reviews. Each submission is analyzed, compared to historical data, and scored with context — so every decision feels objective and well-founded.

- Get a numeric score supported by clear reasoning and contextual notes.

- Compare performance against past speakers and event benchmarks.

- Receive feedback written in your personas’ tone and expertise, ensuring consistency and depth across evaluations.

Your Privacy, Our Priority

With AICPA SOC 2 Type II certification and GDPR compliance, Sessionboard is built on trust, transparency, and the highest data security standards.

The First AI-Powered Speaker Review Assistant

Eliminate the manual burden of reviewing hundreds or thousands of session submissions and speakers. Scale the evaluation of your call-for-content submissions today.

Frequently Asked Questions

Built to scale with you—whether you're hosting one event or a hundred. No upgrades needed to unlock the essentials.

Find detailed answers about the operation, scalability, and management of AI-assisted review for your event or awards program.

AI-powered abstract review uses artificial intelligence to evaluate session or paper submissions against predefined criteria, such as originality, relevance, speaker expertise, and audience value, and to automatically generate scored feedback. Sessionboard's AI Evaluators let you define custom reviewer personas with specific expertise profiles, tone, and weighted scoring rubrics. The AI reviews each submission in that persona's voice, producing numeric scores supported by written reasoning. This helps event teams process large volumes of submissions faster while keeping evaluation quality consistent and reducing reviewer bias.

AI Evaluators are designed to support your review team, not replace them. They excel at first-pass triage — scoring high volumes of submissions quickly, flagging outliers, and surfacing the most competitive abstracts for human attention. For final selection decisions, especially at academic conferences or awards programs where subject-matter nuance matters, human reviewers remain central. The most effective approach is to use AI to handle volume and have human reviewers apply final judgment — which is exactly how Sessionboard's review workflow is designed.
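The first-pass triage pattern can be sketched in a few lines. This is an illustrative assumption, not Sessionboard's workflow logic: `Submission`, `triage`, the 0 to 10 scale, and the disagreement threshold are all hypothetical. Submissions are ranked by their mean AI score, the top entries go to human reviewers as a shortlist, and lower-ranked entries where personas disagree sharply are flagged so a human can take a second look.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """Hypothetical submission with one AI score per persona (0-10 scale assumed)."""
    title: str
    ai_scores: list[float]

    @property
    def mean_score(self) -> float:
        if not self.ai_scores:
            raise ValueError(f"{self.title!r} has no AI scores yet")
        return sum(self.ai_scores) / len(self.ai_scores)

    @property
    def spread(self) -> float:
        # Gap between the most and least favorable persona; a large gap marks an outlier.
        return max(self.ai_scores) - min(self.ai_scores)


def triage(subs: list[Submission], shortlist_size: int, disagreement: float = 2.0):
    """Return (shortlist, flagged): top-ranked entries plus contested ones for human review."""
    ranked = sorted(subs, key=lambda s: s.mean_score, reverse=True)
    shortlist = ranked[:shortlist_size]
    flagged = [s for s in ranked[shortlist_size:] if s.spread >= disagreement]
    return shortlist, flagged


subs = [
    Submission("A", [9.0, 8.5]),
    Submission("B", [4.0, 8.0]),
    Submission("C", [6.0, 6.5]),
    Submission("D", [3.0, 3.5]),
]
shortlist, flagged = triage(subs, shortlist_size=2)
print([s.title for s in shortlist])  # ['A', 'C']
print([s.title for s in flagged])    # ['B']
```

Nothing is rejected automatically in this sketch; it only decides what human reviewers look at first, leaving final judgment with them.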

One of the biggest challenges in peer review is inconsistency — different reviewers apply different standards, leading to variable scores for similar submissions. Sessionboard's AI reviewer personas solve this by encoding your evaluation criteria into a stable, repeatable profile. You define the persona's name, professional background, expertise areas, tone, and scoring weights. Every abstract is then evaluated against the same standard, making cross-submission comparisons more meaningful and reducing the cognitive load on your human review committee. You can also create multiple personas for different tracks or session types.

Any event that receives more abstract or session submissions than its review team can efficiently process is a strong candidate for AI-assisted evaluation. This includes academic conferences managing hundreds of research paper submissions, professional association annual meetings, industry summits selecting competitive speakers, and awards programs evaluating large applicant pools. Sessionboard's AI Evaluators are particularly valuable for organizations running multiple events per year that need to maintain consistent review standards across different programs.

Sessionboard's AI evaluation framework is built around your criteria, not generic algorithmic preferences. By defining weighted scoring dimensions — such as originality, practical applicability, audience relevance, and speaker qualifications — you control what quality means for your event. All AI-generated scores are accompanied by written reasoning, making the evaluation process auditable and transparent. Human reviewers can override AI scores at any time, and all evaluation activity is logged for accountability. The platform is also compliant with the General Data Protection Regulation (GDPR) and certified under SOC 2 Type II.
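The override-and-audit behavior can be modeled as a small record. This is a hypothetical sketch, not Sessionboard's data model: `Evaluation`, its fields, and the log format are assumptions. The AI score and its written reasoning are kept unchanged, a human override sets a separate final score, and every action is appended to an audit log with a UTC timestamp.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Evaluation:
    """Hypothetical evaluation record: AI score, optional human override, audit trail."""
    submission_id: str
    ai_score: float
    ai_reasoning: str                      # written justification accompanying the AI score
    final_score: Optional[float] = None    # set only when a human overrides the AI
    audit_log: list[dict] = field(default_factory=list)

    def __post_init__(self) -> None:
        self._log("ai_scored", actor="ai", score=self.ai_score, note=self.ai_reasoning)

    def override(self, reviewer: str, score: float, reason: str) -> None:
        """Record a human override; the original AI score is preserved for auditing."""
        self.final_score = score
        self._log("human_override", actor=reviewer, score=score, note=reason)

    @property
    def effective_score(self) -> float:
        return self.ai_score if self.final_score is None else self.final_score

    def _log(self, action: str, actor: str, score: float, note: str) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "actor": actor,
            "score": score,
            "note": note,
        })


ev = Evaluation("S-101", 6.5, "Timely topic; speaker credentials are thin.")
ev.override("program.chair", 8.0, "Speaker is a recognized practitioner in this area.")
print(ev.effective_score)                   # 8.0
print([e["action"] for e in ev.audit_log])  # ['ai_scored', 'human_override']
```

Storing the override separately, rather than overwriting the AI score, is the design choice that keeps the record auditable: anyone reviewing the log can see both what the AI proposed and why a human changed it.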

Manual abstract review is time-consuming, inconsistent, and difficult to scale. Reviewers get fatigued, scoring standards drift, and coordinating a committee across email and spreadsheets creates significant administrative overhead. Sessionboard centralizes all submissions in a single dashboard, automatically assigns reviewers, tracks scoring progress in real time, and uses AI to support high-volume evaluation. This directly addresses the reviewer attrition and missed deadlines common in manual workflows: program chairs can monitor completion as it happens and send bulk reminders to reviewers with outstanding assignments, keeping review cycles on track.

Yes. Sessionboard is compliant with the General Data Protection Regulation (GDPR) and certified to SOC 2 Type II (System and Organization Controls) standards. Submission data processed by AI Evaluators is handled through encrypted channels and in accordance with Sessionboard's data protection policies. For organizations in regulated industries — including healthcare, finance, and academic institutions with institutional review requirements — Sessionboard's compliance certifications provide the assurance needed to adopt AI-assisted review with confidence.
